Grounded in experience. Focused on clarity.

My Tools

LANGUAGES:

Python · R · SQL

FAVORITE LIBRARIES:

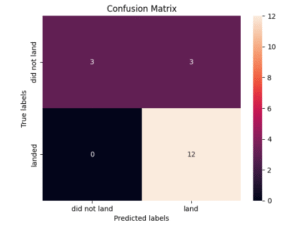

Pandas · Matplotlib · Seaborn · Folium · Scikit-learn

BI & WORKFLOW:

Excel · Tableau · Looker Studio · BigQuery · Google Sheets

AI:

Prompt Engineering · APIs · Predictive Modelling · Compliance